Multiple linear regression attempts to model the relationship between two or more features and a response by fitting a linear equation to the observed data. It is simply an extension of simple linear regression.

Consider a dataset with p features (or independent variables) and one response (or dependent variable). The dataset contains n rows (observations). X (the feature matrix) is a matrix of size n × p, where x_…

In the score used to evaluate the model, y' is the estimated target output, y is the corresponding (correct) target output, and Var is the variance, the square of the standard deviation. The best possible score is 1.0; lower values are worse.

Given below are the basic assumptions that a linear regression model makes regarding a dataset on which it is applied:

Linear relationship: The relationship between the response and feature variables should be linear. The linearity assumption can be tested using scatter plots. As shown below, the 1st figure represents linearly related variables, whereas the variables in the 2nd and 3rd figures are most likely non-linear. So, the 1st figure will give better predictions using linear regression.

Little or no multicollinearity: It is assumed that there is little or no multicollinearity in the data. Multicollinearity occurs when the features (or independent variables) are not independent of each other.
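The score and the multicollinearity assumption can both be checked numerically. The sketch below assumes that the score described in terms of y, y' and Var is the explained variance score, 1 - Var(y - y') / Var(y); that formula is an assumption, since it does not appear in the text. The helper names and all sample values are hypothetical and made up for illustration.

```python
from math import sqrt
from statistics import mean, pvariance


def explained_variance_score(y_true, y_pred):
    """Assumed form of the score: 1 - Var(y - y') / Var(y). Best value is 1.0."""
    residuals = [yt - yp for yt, yp in zip(y_true, y_pred)]
    return 1.0 - pvariance(residuals) / pvariance(y_true)


def pearson_correlation(u, v):
    """Pearson correlation between two equally long feature columns."""
    mean_u, mean_v = mean(u), mean(v)
    cross = sum((a - mean_u) * (b - mean_v) for a, b in zip(u, v))
    spread_u = sum((a - mean_u) ** 2 for a in u)
    spread_v = sum((b - mean_v) ** 2 for b in v)
    return cross / sqrt(spread_u * spread_v)


# Hypothetical targets and predictions (illustration only).
y_true = [3.0, 5.0, 7.0, 9.0]
y_pred = [2.5, 5.5, 7.0, 9.0]
score = explained_variance_score(y_true, y_pred)

# Hypothetical feature columns: feature_b is roughly 2 * feature_a,
# so the two are far from independent of each other.
feature_a = [1.0, 2.0, 3.0, 4.0]
feature_b = [2.1, 3.9, 6.2, 7.8]
corr_ab = pearson_correlation(feature_a, feature_b)

print("explained variance score:", score)
print("correlation between features:", corr_ab)
```

A correlation close to 1 or -1 between two features is one simple warning sign of multicollinearity, although a pairwise check like this will not catch dependence that involves three or more features at once.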

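To create our model, we must "learn" or estimate the values of the regression coefficients b_0 and b_1. Once we have estimated these coefficients, we can use the model to predict responses!

In this article, we are going to use the principle of Least Squares. Consider:

$$y_i = b_0 + b_1 x_i + e_i = h(x_i) + e_i \quad\Rightarrow\quad e_i = y_i - h(x_i)$$

Here, e_i is the residual error in the ith observation. So, our aim is to minimize the total residual error.

We define the squared error or cost function, J, as the sum of squared residuals, up to a positive constant factor that does not change where the minimum lies:

$$J(b_0, b_1) \propto \sum_{i=1}^{n} e_i^2$$

Our task is to find the values of b_0 and b_1 for which J(b_0, b_1) is minimum!

Without going into the mathematical details, we present the result here:

$$b_1 = \frac{SS_{xy}}{SS_{xx}}, \qquad b_0 = \bar{y} - b_1 \bar{x}$$

where SS_xy is the sum of cross-deviations of y and x:

$$SS_{xy} = \sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y}) = \sum_{i=1}^{n} x_i y_i - n \bar{x} \bar{y}$$

and SS_xx is the sum of squared deviations of x:

$$SS_{xx} = \sum_{i=1}^{n} (x_i - \bar{x})^2 = \sum_{i=1}^{n} x_i^2 - n \bar{x}^2$$

Note: The complete derivation for finding least squares estimates in simple linear regression can be found here.

Code: Python implementation of above technique on our small dataset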
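A minimal sketch of the least squares estimates, written in plain Python. It assumes the standard closed-form result b_1 = SS_xy / SS_xx and b_0 = mean(y) - b_1 * mean(x). The function name `estimate_coefficients` and the ten sample values are assumptions chosen for illustration.

```python
def estimate_coefficients(x, y):
    """Return (b_0, b_1) that minimize the sum of squared residuals."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n

    # Sum of cross-deviations of y and x.
    ss_xy = sum(xi * yi for xi, yi in zip(x, y)) - n * mean_x * mean_y
    # Sum of squared deviations of x.
    ss_xx = sum(xi * xi for xi in x) - n * mean_x * mean_x

    b_1 = ss_xy / ss_xx            # slope
    b_0 = mean_y - b_1 * mean_x    # intercept
    return b_0, b_1


# Illustrative dataset with n = 10 observations (assumed values).
x = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
y = [1, 3, 2, 5, 7, 8, 8, 9, 10, 12]

b_0, b_1 = estimate_coefficients(x, y)
print("Estimated coefficients: b_0 =", b_0, "b_1 =", b_1)

# Residuals e_i = y_i - h(x_i) for the fitted line.
residuals = [yi - (b_0 + b_1 * xi) for xi, yi in zip(x, y)]
print("Sum of squared residuals:", sum(e * e for e in residuals))
```

When the fitted line includes an intercept, the residuals sum to approximately zero, which is a quick sanity check on the estimates.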

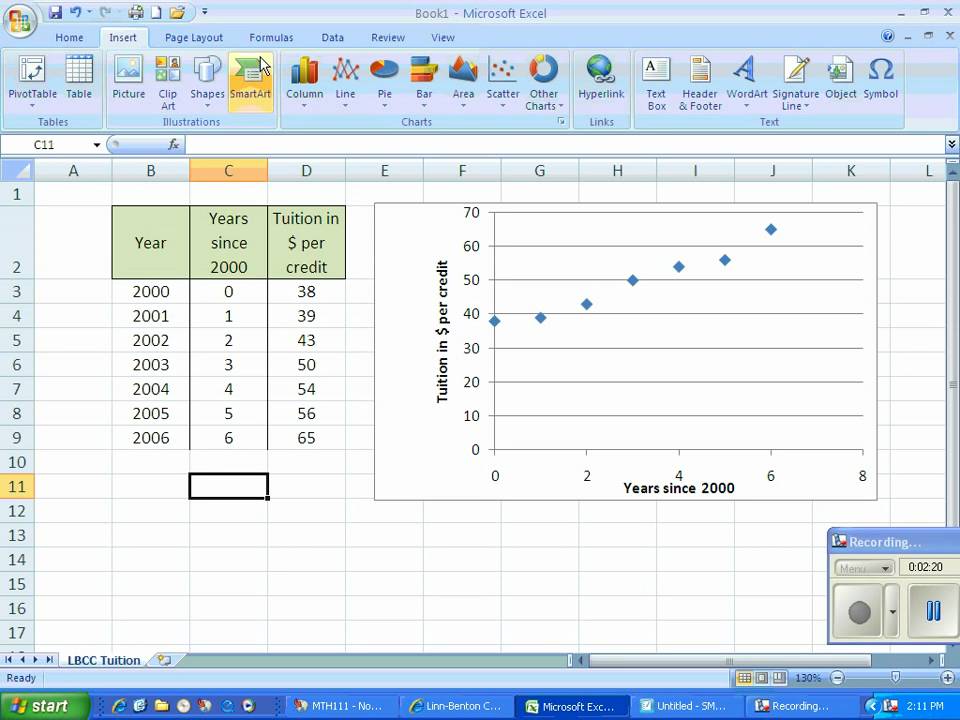

We define y as the response vector, i.e. y = [y_1, y_2, ..., y_n], for n observations (in the above example, n = 10).

The above dataset can be visualized as a scatter plot. Now, the task is to find the line that best fits this scatter plot so that we can predict the response for any new feature value (i.e. a value of x not present in the dataset).

The equation of the regression line is represented as:

$$h(x_i) = b_0 + b_1 x_i$$

Here, h(x_i) represents the predicted response value for the ith observation.
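As a small illustration of using the regression line for prediction, the sketch below evaluates the straight-line form h(x) = b_0 + b_1 * x at a feature value that is not in the dataset. The coefficient values and the new x are hypothetical, picked only to keep the arithmetic easy to follow.

```python
def h(x, b_0, b_1):
    """Predicted response for feature value x on the line b_0 + b_1 * x."""
    return b_0 + b_1 * x


# Hypothetical coefficients (in practice these are estimated from data).
b_0, b_1 = 1.0, 2.0

# A feature value that does not appear among the observed x values.
new_x = 4.5
predicted = h(new_x, b_0, b_1)
print("Predicted response for x =", new_x, "is", predicted)
```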

Linear Regression (Python Implementation)